Release monitoring for ecommerce: The post-deploy blind spot

Ecommerce release monitoring is the practice of automatically connecting every code deployment to changes in site stability, performance, and customer behaviour — so teams know exactly what a release did to their ecommerce experience within hours, not days. Without it, most teams are shipping code and hoping nothing breaks, then finding out from customers when it does.

That gap — between deploying code and understanding its impact — is one of the most expensive blind spots in ecommerce.

You shipped. Now what?

Here is what most ecommerce releases look like from the inside: engineering merges a feature branch, QA signs off on staging, the deployment goes out, and everyone moves on to the next sprint.

What happens on the live site? Nobody knows. Not right away, anyway.

Maybe conversion dips 3% on mobile checkout. Maybe a third-party script conflict introduces a new JavaScript error on PDPs. Maybe the new promo banner element is pushing LCP past the 2.5-second threshold on product listing pages.

These things don't announce themselves. They accumulate quietly. And by the time someone notices — through a customer complaint, a support ticket, or a weekly analytics review — the damage is already done.

Why traditional monitoring misses the release-to-revenue connection

Engineering teams are not short on monitoring tools. Sentry tracks errors. Datadog tracks infrastructure. Google Analytics tracks traffic. The problem is not a lack of data. It is a lack of connection between that data and the deployment that caused it.

Here is what that looks like in practice:

Error monitoring without release context: Your error monitoring tool shows a spike in JavaScript errors on Friday afternoon. Was it the Thursday deploy? The new A/B test? A third-party script update? Without release tagging, engineering spends hours correlating timelines manually, or worse, opens a war room to triage something that could have been flagged automatically.

Performance monitoring without ecommerce framing: Your RUM tool shows LCP degraded by 400ms on mobile. But it cannot tell you that the degradation is isolated to checkout pages, that it started 90 minutes after your last deploy, or that it correlates with a 2.1% drop in checkout completion rate. Without ecommerce-specific context, performance data is noise.

Analytics without deployment awareness: Your BI dashboards show conversion dipped this week. But those dashboards have no concept of deployments. Product teams are left guessing whether the dip is seasonal, traffic-related, or caused by something their own engineering team introduced.

The core issue is fragmentation. Error data lives in one tool. Performance data lives in another. Conversion data lives in a third. And none of them are aware that a release happened at all.

What ecommerce release monitoring actually looks like

Ecommerce release monitoring closes the gap by treating every deployment as a measurable event — and automatically connecting it to the signals that matter: error rates, page performance, session behaviour, and funnel conversion.

Here is what that looks like when done well:

1. Automatic release tagging via CI/CD integration

When your pipeline deploys, the monitoring platform registers the release with a timestamp, version, and environment tag. No manual annotation. No Slack messages that say "just deployed v2.14.3." The system knows.
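To make the idea concrete, here is a minimal Python sketch of the record a pipeline step might register after a successful deploy. The `ReleaseEvent` fields and the `build_release_event` helper are hypothetical, not Noibu's API. How the record is sent (for example as a JSON POST from a CI job) depends on the endpoint and authentication your monitoring platform documents.

```python
import json
from dataclasses import asdict, dataclass
from datetime import datetime, timezone
from typing import Optional


@dataclass(frozen=True)
class ReleaseEvent:
    """Hypothetical release record; the field names are illustrative assumptions."""
    version: str
    environment: str
    commit_sha: str
    deployed_at: str  # ISO 8601 timestamp, normalized to UTC


def build_release_event(
    version: str,
    environment: str,
    commit_sha: str,
    now: Optional[datetime] = None,
) -> ReleaseEvent:
    """Build the record a CI/CD job could send once the deploy step succeeds."""
    if not version:
        raise ValueError("version must be non-empty")
    moment = now if now is not None else datetime.now(timezone.utc)
    return ReleaseEvent(
        version=version,
        environment=environment,
        commit_sha=commit_sha,
        deployed_at=moment.astimezone(timezone.utc).isoformat(),
    )


if __name__ == "__main__":
    event = build_release_event(
        "v2.14.3",
        "production",
        "a1b2c3d",
        now=datetime(2024, 5, 2, 14, 30, tzinfo=timezone.utc),
    )
    # JSON body a pipeline step might POST to a (hypothetical) release endpoint.
    print(json.dumps(asdict(event)))
```

Passing `now` explicitly keeps the helper deterministic in tests. In a real pipeline, the version and commit would typically be read from the CI environment rather than hard-coded.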

2. Pre/post comparison across ecommerce signals

Within minutes of a deploy, the platform compares error rates, performance metrics (LCP, INP, CLS), and funnel behaviour before and after the release. If checkout errors spike, if PDP load times degrade, if cart abandonment increases — it is flagged in the context of the specific release that caused it.
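One way to sketch a pre/post comparison is as a two-proportion z-test on the share of sessions that hit an error before and after the release timestamp. This standard statistical technique is chosen here for illustration and is not necessarily what any particular platform uses. The `Window` type, the helper names, and both thresholds are assumptions.

```python
import math
from dataclasses import dataclass


@dataclass(frozen=True)
class Window:
    """Session counts for one side of the comparison (before or after a release)."""
    sessions: int
    sessions_with_error: int

    @property
    def rate(self) -> float:
        return self.sessions_with_error / self.sessions if self.sessions else 0.0


def two_proportion_z(pre: Window, post: Window) -> float:
    """z statistic for the post error rate versus the pre rate, using a pooled rate."""
    if pre.sessions == 0 or post.sessions == 0:
        return 0.0
    pooled = (pre.sessions_with_error + post.sessions_with_error) / (
        pre.sessions + post.sessions
    )
    se = math.sqrt(pooled * (1 - pooled) * (1 / pre.sessions + 1 / post.sessions))
    return (post.rate - pre.rate) / se if se > 0 else 0.0


def is_regression(
    pre: Window,
    post: Window,
    z_threshold: float = 3.0,            # assumed: minimum one-sided z score
    min_relative_increase: float = 0.2,  # assumed: ignore increases under 20%
) -> bool:
    """Flag a release only if the error-rate jump is both significant and large."""
    if post.rate <= pre.rate:
        return False
    relative = (post.rate - pre.rate) / pre.rate if pre.rate > 0 else math.inf
    return two_proportion_z(pre, post) >= z_threshold and relative >= min_relative_increase


if __name__ == "__main__":
    before = Window(sessions=20_000, sessions_with_error=200)  # 1% of sessions
    after = Window(sessions=5_000, sessions_with_error=150)    # 3% of sessions
    print(is_regression(before, after), round(two_proportion_z(before, after), 2))
```

Requiring both a minimum z score and a minimum relative increase keeps tiny but statistically significant shifts on high-traffic sites from paging anyone. A plain before/after split also cannot separate the release from other changes in the same window, such as a traffic-mix shift or a third-party script update.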

3. Regression detection with revenue impact

This is where generic tools stop and ecommerce-specific monitoring starts. A regression is not just "error count went up." A regression is "this new JavaScript error is firing on 12% of checkout sessions and puts an estimated $47,000 in monthly revenue at risk." That framing changes how fast engineering acts.
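A revenue-at-risk figure needs an explicit model behind it. The sketch below uses one simple, assumed model: affected checkout sessions × baseline conversion × the share of those orders the error is assumed to block × average order value. It is not Noibu's method, and every input, including the example values, is hypothetical.

```python
def estimated_monthly_revenue_at_risk(
    monthly_checkout_sessions: int,
    affected_share: float,       # fraction of checkout sessions hitting the error, e.g. 0.12
    baseline_conversion: float,  # fraction of checkout sessions that normally place an order
    conversion_loss: float,      # assumed fraction of those orders the error prevents
    average_order_value: float,  # in store currency
) -> float:
    """Hypothetical model: orders the error is assumed to block, times order value."""
    for name, value in (
        ("affected_share", affected_share),
        ("baseline_conversion", baseline_conversion),
        ("conversion_loss", conversion_loss),
    ):
        if not 0.0 <= value <= 1.0:
            raise ValueError(f"{name} must be between 0 and 1, got {value}")
    affected_sessions = monthly_checkout_sessions * affected_share
    orders_lost = affected_sessions * baseline_conversion * conversion_loss
    return orders_lost * average_order_value


if __name__ == "__main__":
    # Illustrative inputs only: 200k checkout sessions, 12% affected,
    # 45% baseline checkout conversion, 10% of affected orders lost, $85 AOV.
    at_risk = estimated_monthly_revenue_at_risk(200_000, 0.12, 0.45, 0.10, 85.0)
    print(f"${at_risk:,.0f} estimated monthly revenue at risk")
```

Because `conversion_loss` is a guess for most errors, the output is best read as a prioritization signal rather than an accounting figure. Where it is available, measured conversion of affected versus unaffected sessions would be a stronger input.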

4. Release validation and win confirmation

Release monitoring is not only about catching problems. It is also about confirming wins. When a bug fix deploys and the associated error disappears, the platform validates the resolution. When a performance optimization ships and LCP improves by 300ms on PDPs, the data is there, tied to the specific deploy, for product teams to document the impact.
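Confirming a win can be sketched the same way. The example below compares 75th-percentile LCP before and after a release (Google assesses Core Web Vitals at the 75th percentile) and checks that a fixed error signature stops appearing. The helper names, the nearest-rank percentile method, the 100 ms minimum improvement, and the minimum session count are all assumptions made for illustration.

```python
import math
from typing import Mapping, Sequence, Tuple


def p75(values_ms: Sequence[float]) -> float:
    """75th percentile using the nearest-rank method."""
    if not values_ms:
        raise ValueError("need at least one measurement")
    ordered = sorted(values_ms)
    rank = math.ceil(0.75 * len(ordered))
    return ordered[rank - 1]


def confirm_lcp_win(
    pre_ms: Sequence[float],
    post_ms: Sequence[float],
    min_improvement_ms: float = 100.0,  # assumed threshold for a "real" win
) -> Tuple[bool, float]:
    """Return (improved, delta_ms); a positive delta means LCP got faster."""
    delta = p75(pre_ms) - p75(post_ms)
    return delta >= min_improvement_ms, delta


def confirm_fix(
    post_release_error_counts: Mapping[str, int],
    signature: str,
    post_release_sessions: int,
    min_sessions: int = 1_000,  # assumed: enough traffic for "zero" to mean something
) -> bool:
    """A fix counts as validated once enough sessions pass with no matching error."""
    return (
        post_release_sessions >= min_sessions
        and post_release_error_counts.get(signature, 0) == 0
    )


if __name__ == "__main__":
    pdp_lcp_before = [2100, 2400, 2600, 2900, 3100, 2700, 2500, 2800]
    pdp_lcp_after = [1900, 2100, 2300, 2500, 2600, 2400, 2200, 2000]
    print(confirm_lcp_win(pdp_lcp_before, pdp_lcp_after))
    print(confirm_fix({"TypeError: cart is undefined": 0}, "TypeError: cart is undefined", 4_200))
```

Running the same comparison separately per page type (PDP, PLP, checkout) keeps a win on one template from being diluted by unchanged pages elsewhere.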

The cost of learning about regressions from customers

Every team has a version of this story: a release goes out on Thursday. On Friday, customer support starts getting tickets about checkout failures. By Monday morning, engineering is in a war room trying to reproduce the issue while the VP of Ecommerce asks how much revenue was lost over the weekend.

That timeline — deploy Thursday, detect Monday — is not unusual. It is the norm for teams without release monitoring.

"The biggest unlock for my dev team is to be able to detect regressions before they become an issue. When we release code, we know instantly if we've introduced a regression to the site, which is really powerful for us to detect the health of our business."

— Matt Ezyk, Senior Director of Engineering Ecommerce at Hanna Andersson

The financial cost compounds. A checkout error introduced on a Thursday deployment that goes undetected until Monday does not just affect weekend revenue. It erodes trust. It creates support load. It pulls engineering off roadmap work and into reactive firefighting. And it raises the stakes on every future deploy — making teams more cautious, slower to ship, and less willing to iterate.

"When we do a release, we really count on Noibu. We know that if there's an issue, Noibu will surface it just like a customer would. We can address it immediately."

— Yannick Vial, Sr. VP of Digital Development & Unified Commerce at La Maison Simons

This is the real cost of release blind spots: not just the revenue lost from a single regression, but the cultural drag that accumulates when teams cannot trust their own deployments.

What engineering leads and product owners need from release monitoring

Engineering leads and product owners have different relationships with releases — but they share the same gap.

Engineering needs to know: Did this deploy introduce new errors? Did it degrade performance? Is there a regression I need to roll back or hotfix? And critically: can I reproduce and resolve the issue with full session context, stack traces, and environment data — without spending hours on manual triage?

Product needs to know: Did this release move the metrics we expected? Is the conversion improvement from the checkout redesign showing up in real session data? Did the experiment we shipped create any unexpected friction? And can I demonstrate the impact to leadership with data, not anecdotes?

"We use insights in Noibu's platform to better plan for future releases. So it's not just obviously working on the issues on hand but planning for the future and ensuring that the types of issues that we're seeing on Noibu are not reoccurring."

— Anique Ahmed, Manager of Digital Platform Health & Data Management at Giant Tiger

Both roles need a system that automatically connects deployments to outcomes, without requiring anyone to stitch together data from several different tools after the fact.

How Noibu approaches release monitoring for ecommerce

Noibu's Release Monitoring product line is built specifically for this problem. It connects your CI/CD pipeline directly to Noibu's ecommerce analytics and monitoring platform — so every deployment is automatically tracked against changes in stability, performance, and customer behaviour.

Here is what that means in practice:

CI/CD integration registers every release automatically. No manual tagging. No "did anyone log the deploy?" conversations. Every release is timestamped and linked to the signals that follow.

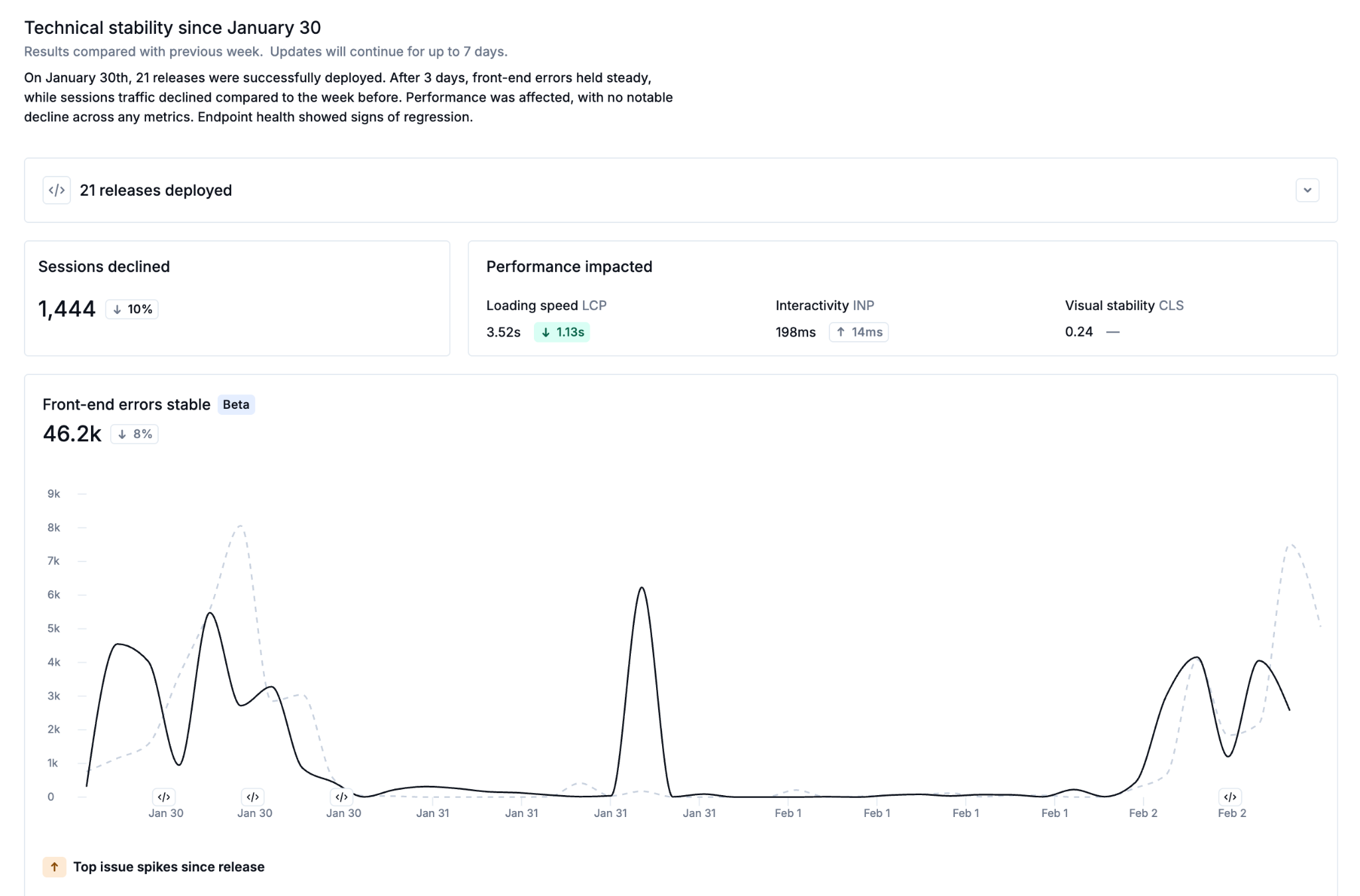

Pre/post release comparison is immediate. Within minutes of a deploy, Noibu surfaces changes in error rates, Core Web Vitals (LCP, INP, CLS), and session behaviour — compared against the pre-release baseline. Regressions are flagged. Improvements are confirmed.

Regressions are tied to revenue impact. This is the difference between ecommerce release monitoring and generic APM. When Noibu detects a regression, it shows the estimated revenue at risk — not just the error count. Engineering and product teams can prioritize rollbacks and hotfixes based on business impact, not guesswork.

Session context is attached to every regression. When a release introduces a new issue, Noibu links it to full session replays — rage clicks, funnel stage, device, browser, the complete customer experience. Engineering can reproduce and resolve without asking support for reproduction steps.

Validation closes the loop. When a fix ships, Noibu confirms it. The error rate drops, the performance metric recovers, the sessions are clean. Product teams can report the win with confidence.

"The impact of Noibu is that we're not blind anymore about the bugs we have. I'm a lot more confident when we release a new version of the site. We know which device the user is on, what they're navigating, what the customer is seeing, and when and where they're seeing bugs."

— Sébastien Ribeil, Head of Digital Factory at ETAM Group

The before and after: release workflows without and with ecommerce release monitoring

Five signs your ecommerce team needs release monitoring

Not every team realizes they have a release visibility gap. The symptoms often look like other problems — "our analytics are noisy," "our engineers are reactive," "we're slow to ship." Here are the patterns that point directly to a release monitoring gap:

You learn about post-deploy issues from customers, not your tools. If the first signal of a regression is a support ticket or a Slack message from the CX team, your monitoring is not connected to your deployment pipeline.

Engineering spends hours correlating error spikes to deployments. If your team is manually checking timestamps across Sentry, Datadog, and Google Analytics to figure out which release caused an issue, you are paying for engineering time to do what a CI/CD integration should automate.

Product teams cannot prove whether a release improved conversion. If the only way to measure a feature's impact is a before-and-after comparison in Google Analytics — with no awareness of what else shipped that week — product is operating on faith, not data.

You deploy less often than you should because the risk feels too high. Fear of breaking the site slows release velocity. Release monitoring lowers that risk by making every deploy a known quantity: if something regresses, you know immediately.

Post-mortems keep landing on "we didn't know until..." If your incident retrospectives consistently identify detection speed as the failure point, the problem is not your team. It is your tooling.

Release monitoring is not optional for ecommerce teams shipping at pace

The frequency of ecommerce deployments has only increased. Between headless architecture, composable frontends, third-party script updates, platform patches, and A/B testing, a typical mid-market ecommerce site sees dozens of changes per week — any one of which could impact conversion.

The teams that ship confidently are not the ones that test more carefully. They are the ones that see more clearly. They know what every release did to their site because their monitoring is connected to their deployment pipeline, tied to ecommerce-specific signals, and framed in revenue impact — not just error counts.

That is what release monitoring for ecommerce is built for. And it is why teams at brands like Hanna Andersson, La Maison Simons, ETAM Group, Giant Tiger, and David's Bridal rely on Noibu to close the gap between shipping code and knowing what it did.

"Noibu gives me the confidence to release faster because I know if something breaks, I'll be alerted — and I'll know exactly how to fix it."

— Yoav Shargil, CDO of David's Bridal

Related topics:

- How does ecommerce performance monitoring protect revenue during high-traffic events?

- What is digital experience analytics (DXA) for ecommerce?

- How to consolidate your ecommerce monitoring stack into a single platform

- Why ecommerce teams need 100% session capture, not sampled data

- How AI-powered issue prioritization cuts MTTR for ecommerce engineering teams

Stop learning about regressions from your customers

Every deploy should be a known quantity — not a moment of hope. Noibu's Release Monitoring connects your CI/CD pipeline to the ecommerce signals that matter: errors, performance, funnel behaviour, and revenue impact. So you know what every release did to your site before your customers do.

See what your last release actually did to your site.


About Noibu

Noibu is an ecommerce analytics and monitoring platform that gives teams complete visibility into errors, performance, sessions, and digital experience — so issues and opportunities are found, prioritized, and acted on before customers feel the impact.